i have been working on skin color tracking software as the first part of my diploma thesis 😀. i think it is working fine… you can calibrate it in real time for any color. i am going to use it for skin color tracking, but i figured it would be nice to be able to recalibrate in case the lighting conditions in the room change… so you just recalibrate on your hand and continue to work…

my whole diploma thesis is going to be open source… so i am putting this work-in-progress code here as well (yes, it is coded in Python and yes, i am using OpenCV)

in short, here is what i am doing…

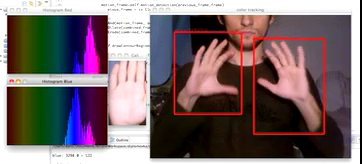

first there is a calibration step: just hit “c” and place your desired color (your hand) inside the rectangle. from then on, two histograms represent your color using the blue-difference (Cb) and red-difference (Cr) channels of the YCbCr color space, which seems like the best solution for skin color tracking.
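a minimal sketch of what such a calibration could look like, not the actual thesis code. it uses only numpy so it runs without a camera, and it assumes the full-range BT.601 conversion, 32 bins per channel and a simple score threshold. the function names `bgr_to_cbcr`, `calibrate` and `color_mask` are made up for this illustration.

```python
import numpy as np

def bgr_to_cbcr(bgr):
    """Convert a uint8 BGR image (H, W, 3) to Cb and Cr planes (full-range BT.601)."""
    b, g, r = [bgr[..., i].astype(np.float64) for i in range(3)]
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return (np.clip(np.rint(cb), 0, 255).astype(np.uint8),
            np.clip(np.rint(cr), 0, 255).astype(np.uint8))

def calibrate(frame_bgr, rect, bins=32):
    """Build Cb and Cr histograms from the calibration rectangle (x, y, w, h)."""
    x, y, w, h = rect
    cb, cr = bgr_to_cbcr(frame_bgr[y:y + h, x:x + w])
    hist_cb, _ = np.histogram(cb, bins=bins, range=(0, 256))
    hist_cr, _ = np.histogram(cr, bins=bins, range=(0, 256))
    # scale each histogram so its most populated bin has weight 1.0
    return hist_cb / hist_cb.max(), hist_cr / hist_cr.max()

def color_mask(frame_bgr, hist_cb, hist_cr, threshold=0.1):
    """Mark pixels whose Cb and Cr values both land in well-populated bins."""
    bins = len(hist_cb)
    cb, cr = bgr_to_cbcr(frame_bgr)
    idx_cb = cb.astype(np.int64) * bins // 256   # same binning as np.histogram above
    idx_cr = cr.astype(np.int64) * bins // 256
    score = hist_cb[idx_cb] * hist_cr[idx_cr]
    return score > threshold
```

the luma channel (Y) is ignored on purpose in this sketch, so a brighter or darker hand still lands in roughly the same Cb/Cr bins. pressing “c” again would simply call `calibrate` with the current frame.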

after calibration, color segmentation runs alongside motion detection. regions are determined by combining these two binary images. i assume that anything can move, but only moving skin-colored segments are the output… so in case you can see your head in the camera, please don’t move it that much :D

this combined segmentation is then passed to contour detection, and after some filtering i show the couple of contours that fit. mostly just hands, sometimes the neck… but if i calibrate on some brightly colored object, i get almost 100% satisfaction.
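the combination step can be sketched roughly like this. two assumptions here: motion detection is plain frame differencing on grayscale frames, and the two binary images are merged with a logical AND (so that only pixels that are both skin-colored and moving survive). the helper names and the threshold of 25 are made up for the example, and a simple bounding box stands in for the real contour detection.

```python
import numpy as np

def motion_mask(prev_gray, curr_gray, threshold=25):
    """Pixels whose brightness changed by more than `threshold` between two frames."""
    diff = np.abs(curr_gray.astype(np.int16) - prev_gray.astype(np.int16))
    return diff > threshold

def moving_color_mask(color, motion):
    """Keep only pixels that are both in the calibrated color and moving."""
    return np.logical_and(color, motion)

def bounding_box(mask):
    """Bounding box (x, y, w, h) of all set pixels, or None for an empty mask."""
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None
    x0, y0 = int(xs.min()), int(ys.min())
    return x0, y0, int(xs.max()) - x0 + 1, int(ys.max()) - y0 + 1
```

with the AND, a moving object in the wrong color and a skin-colored object that stands still are both dropped, which matches the behavior described above (and also explains why still hands get lost).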

some pictures:

light only from behind:

additional front light:

both scenes (with the front light off and on) had very bad lighting conditions and my webcam is poor as well… but even with all this, i was able to achieve pretty good skin recognition. it is all about good calibration 😉

video (backlight turned off, only a small front light used):

if you move your head a lot, you can get false positives; this is not prevented so far… also, when you stop moving your hands, this version will stop following them.

known bugs:

– for some reason my windows act weird: after a second calibration, both histogram windows show the blue channel, although the histograms are stored correctly in memory… similarly, the hand capture window can get black parts, probably because of my slow computer, but again the image is represented correctly in memory

in next version:

– i would like to use an “intelligent decider” that detects when hands are on screen but not moving… so for those frames it will skip motion detection and use only color segmentation

– also i would like to have only two blobs as a result, which will prevent false positives, but this is up for further discussion, because i might not need such a match…
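neither of these two ideas is implemented yet, so the following is only a rough sketch of how they might work. it assumes the decider remembers whether hands were found in the previous frame and falls back to color-only segmentation when too few pixels are moving. `decide_mask`, `keep_largest` and the 50 pixel limit are hypothetical.

```python
import numpy as np

def decide_mask(color, motion, hands_seen_last_frame, min_pixels=50):
    """Pick the mask for contour detection; returns (mask, hands_found)."""
    combined = np.logical_and(color, motion)
    if combined.sum() >= min_pixels:
        return combined, True        # hands are moving: use both cues
    if hands_seen_last_frame and color.sum() >= min_pixels:
        return color, True           # hands still on screen but not moving: color only
    return combined, False           # nothing convincing in this frame

def keep_largest(blobs, count=2):
    """Keep the `count` largest blobs; each blob is an (area, bounding_box) tuple."""
    return sorted(blobs, key=lambda blob: blob[0], reverse=True)[:count]
```

the second return value of `decide_mask` is meant to be fed back in as `hands_seen_last_frame` for the next frame. note that the color-only fallback lets a still head through as well, which is where limiting the output to two blobs could help.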

next step in my diploma thesis:

– use 2 cameras and determine the hand position in 3D from their results
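how the 3D step will actually be done is still open. as a rough idea, assuming two identical cameras mounted side by side and already rectified (same image rows, parallel optical axes), depth follows from the horizontal disparity as Z = f · B / d. the function name and all parameters below are hypothetical.

```python
def triangulate(left_xy, right_xy, focal_px, baseline_m, principal_point):
    """Estimate (X, Y, Z) in meters from one matched point in a rectified stereo pair.

    left_xy / right_xy: pixel position of the hand in the left / right image
    focal_px: focal length in pixels, baseline_m: distance between the cameras
    principal_point: (cx, cy) image center in pixels
    """
    xl, yl = left_xy
    xr, _ = right_xy
    disparity = xl - xr
    if disparity <= 0:
        return None                  # point at infinity or a bad match
    cx, cy = principal_point
    z = focal_px * baseline_m / disparity
    x = (xl - cx) * z / focal_px
    y = (yl - cy) * z / focal_px
    return x, y, z
```

for example, with a 700 px focal length and cameras 10 cm apart, a hand seen 70 px further left in the right image than in the left one comes out about 1 m away.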
